Respect the Tool

AI Email Slop, Goodhart's Law, and the Memory That Follows You Home

Christian Ward

Mar 3, 2026

My weekend started with an email from someone who had read my latest newsletter. It was thoughtful. It referenced specific points I'd made.

It quoted my own words back to me with impressive precision.

That last part was the tell.

I've used these tools long enough to recognize AI-generated communication at a glance. The over-quoting was the first flag. Five quoted phrases from a single newsletter in four paragraphs.

Humans reference ideas. They don't extract exact strings and echo them back.

I watched Good Will Hunting enough times to know that quoting from memory looks great in a movie. In a cold email, it's just a model proving that it read the homework.

So I wrote back and asked what model she was using. Not accusatory. Just curious.

She'd been using her company's Gemini subscription. Every email runs through AI first. She edited the output and tried to add her own voice.

And she also (to her great credit) asked if I had any advice to improve the process.

I did.

I fed her original email to Claude and asked one question.

"I know this is built by Gemini. Can you tell me?" Seven distinct patterns, identified in seconds.

Performative agreement as an opener. The word "resonated," which lives on my personal banned list for good reason.

A false dichotomy question in which both options are so soft that neither can be wrong.

And the biggest giveaway.

This letter had no self. No disagreement. No original observation. No personal experience.

She thanked me for being direct. Nobody had ever told her, or challenged her emails.

We spent an hour on a Saturday morning, back and forth, about how to actually use these tools well. I use AI to translate my speech to text. That helps far more than prompting.

For speech-to-text, I use Monologue and Typeless on all my devices. I record my thoughts through Apple Voice Notes and let AI organize from there.

My prompts say something like, "Here is a transcript of my thoughts on this topic. Use the vast majority of my exact word choices and phrasing, but you can freely reorder and reorganize to make it clearer."
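That prompt can be kept as a reusable template. The sketch below is one hypothetical way to do it in Python; the function name `build_organize_prompt` and the `TRANSCRIPT:` delimiter are assumptions for illustration, not part of any tool mentioned here.

```python
def build_organize_prompt(transcript: str, topic: str = "this topic") -> str:
    """Wrap a spoken transcript in an 'organize, don't rewrite' instruction.

    Hypothetical helper: the wording follows the prompt quoted above,
    while the layout and the TRANSCRIPT: delimiter are assumptions.
    """
    cleaned = transcript.strip()
    if not cleaned:
        # Nothing to organize means the model would have to invent the content.
        raise ValueError("transcript is empty; record your thoughts first")

    instruction = (
        f"Here is a transcript of my thoughts on {topic}. "
        "Use the vast majority of my exact word choices and phrasing, "
        "but you can freely reorder and reorganize to make it clearer."
    )
    return f"{instruction}\n\nTRANSCRIPT:\n{cleaned}"


# Example: the transcript text here is a made-up placeholder.
example_prompt = build_organize_prompt(
    "so the thing about tools is you have to respect them",
    topic="respecting tools",
)
```

The point of the empty-transcript check is the same as the point of the paragraph above: the words have to be yours before the model touches them.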

Prompting AI to write for you produces slop.

Using AI to organize what you've already said preserves your voice.

Friends shouldn't let friends send AI-generated slop emails.

It's not just professionals. Pew Research put numbers to it last week. 59% of U.S. teens say students at their school use AI chatbots to cheat "very often" or "somewhat often." Homework AI usage doubled in a single year, from 27% to 54%.

One in ten teens relies on chatbots for most or all of their schoolwork.

A professional runs every email through Gemini. Students are running every assignment through ChatGPT. My daughters, one in college, one in high school, anecdotally confirmed the massive amount of cheating going on.

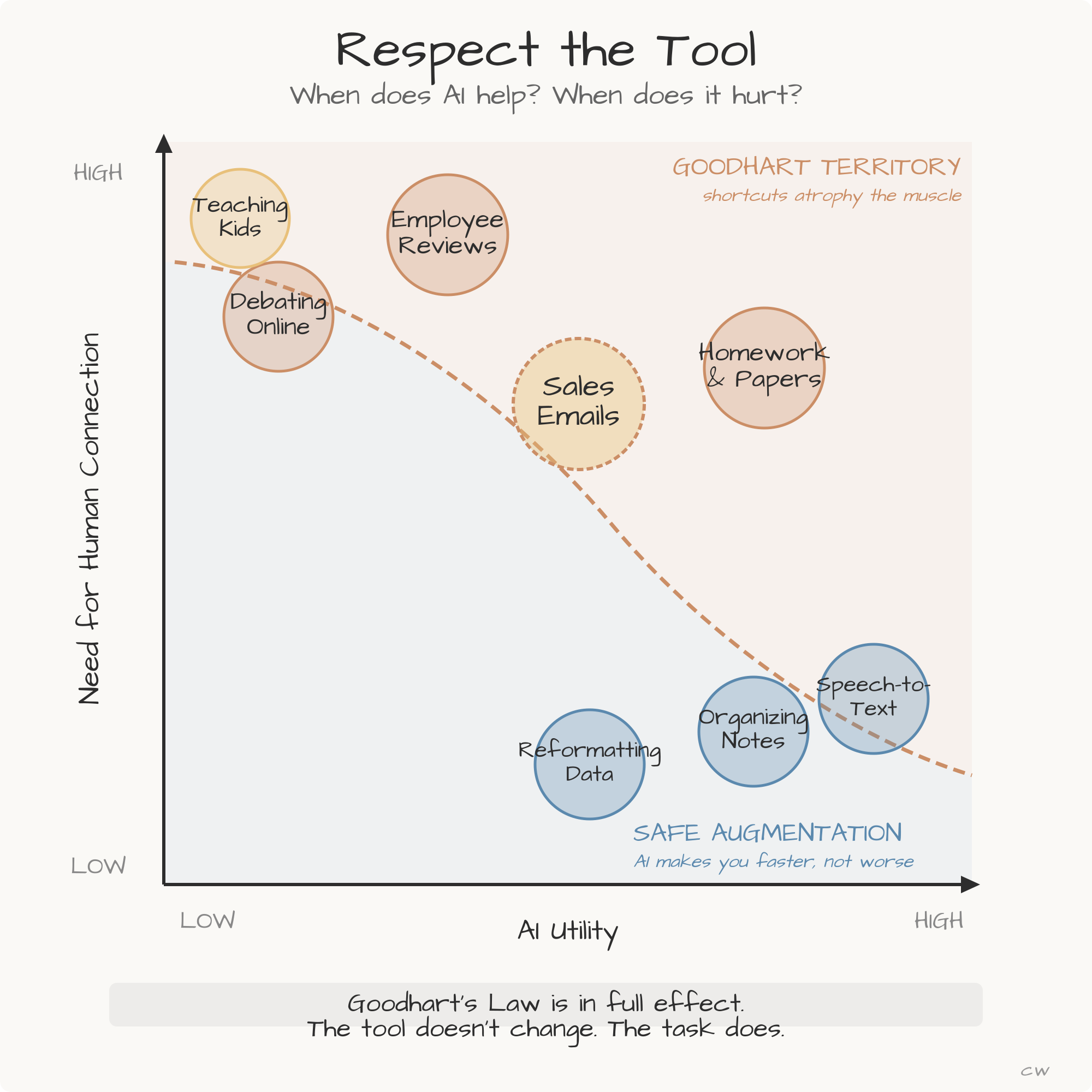

Goodhart's Law says that when a measure becomes a target, it ceases to be a good measure.

AI breaks a lot of measures, and a lot of targets.

If sales performance is measured by emails sent, and AI can generate a thousand of them before lunch, emails sent is no longer measuring effort or connection. It's measuring access to a tool.

If academic performance is measured by papers submitted, and ChatGPT can produce a five-page essay in ninety seconds, papers submitted is no longer a measure of whether a student learned to think.

The metrics look great. But they are no longer measuring the right progress.

When I take my kids camping, I talk about tools constantly.

My son knows the rule by heart. Every time you pick up a knife, an axe, or a saw, you give it your full attention.

The moment you stop respecting a tool, it can kill you.

I don't say that to scare him.

I say it because it's true. A dull axe swung carelessly will find your shin before it finds the wood.

AI won't take your shin off. But a student who lets ChatGPT write every paper graduates without learning to build an argument. A sales professional who runs every email through Gemini loses the ability to connect, in her own words.

The tool didn't fail them. They failed to respect what the tool is. The metrics never caught the difference.

Shortcuts, used carelessly, atrophy the muscles they were supposed to strengthen.

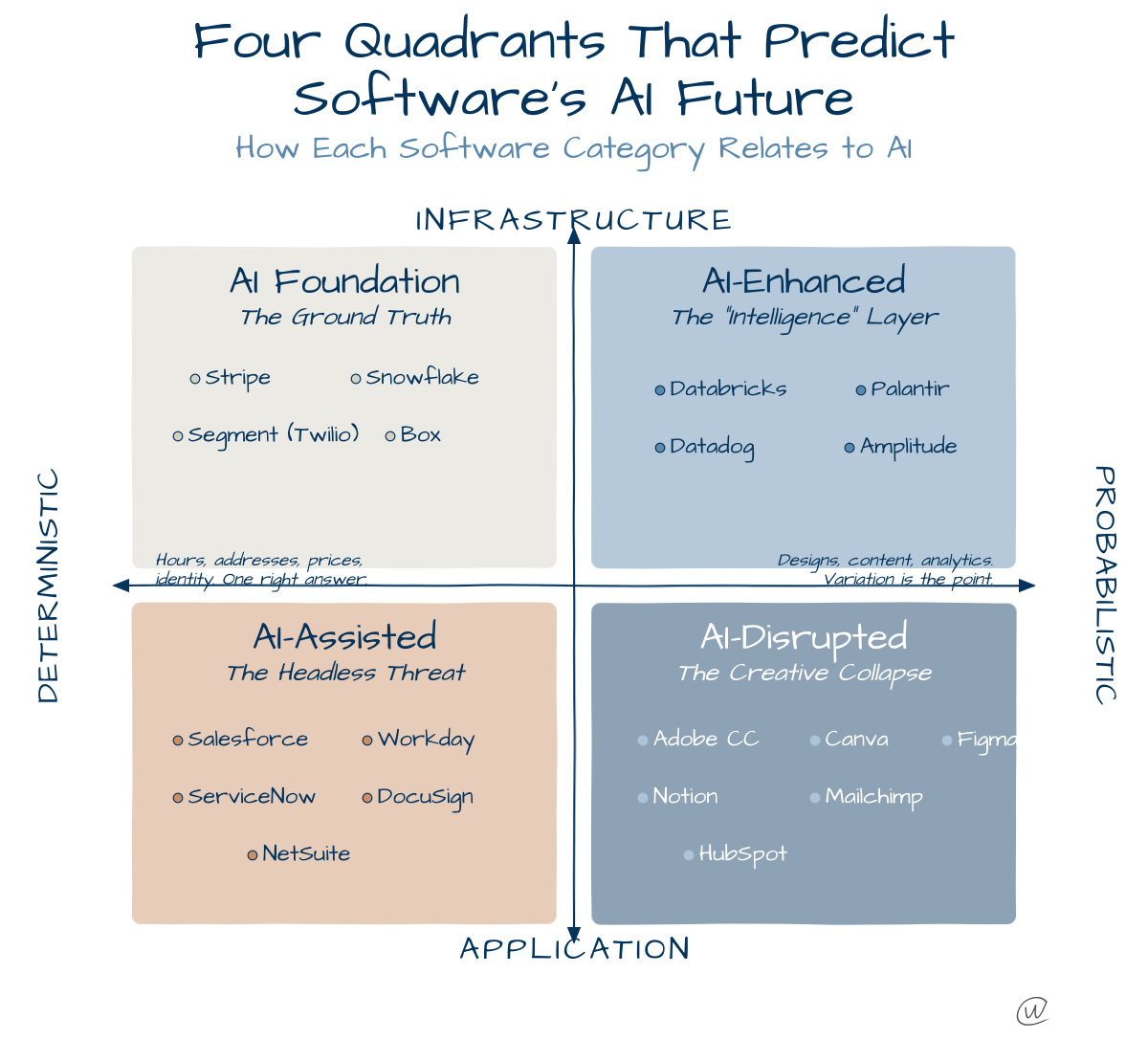

Not every use of AI is equal.

Organizing messy notes, transcribing voice memos, and reformatting data. In each of these, the need for a human element is low, the utility is high, and nobody loses anything.

Writing an employee review or debating someone online whose ideas challenge yours. Helping your kid work through a homework problem. These require you in a way that no model can substitute. When you hand those off, you're not saving time. You're skipping the part that matters.

Think about Goodhart's Law and how you can plot the "Jobs to be Done" on two axes: AI Utility and the Need for Human Connection.

The sales email sits in the middle, and that's where the danger lives. AI can absolutely help you draft faster. But if the person on the other end can tell, you've already lost.
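One way to make that plot concrete is a small lookup, sketched here in Python. The three-level scale, the `triage` rule, and the action labels are assumptions for illustration; only the placements of the example tasks come from the paragraphs above. AI utility for the high-connection tasks is not stated above, so it is left as `None`.

```python
from enum import Enum
from typing import Optional


class Level(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


def triage(ai_utility: Optional[Level], human_need: Level) -> str:
    """Suggest how to approach a task (a hypothetical rule, not a standard)."""
    if human_need is Level.HIGH:
        # The task requires you, whatever the tool can do.
        return "do it yourself"
    if human_need is Level.LOW and ai_utility is Level.HIGH:
        return "hand it to AI"
    # Everything in between: the danger zone.
    return "draft with AI, then rewrite in your own words"


# task name -> (AI utility, need for human connection)
TASKS = {
    "organize messy notes": (Level.HIGH, Level.LOW),
    "transcribe voice memos": (Level.HIGH, Level.LOW),
    "reformat data": (Level.HIGH, Level.LOW),
    "sales email": (Level.MEDIUM, Level.MEDIUM),  # "sits in the middle"
    "employee review": (None, Level.HIGH),        # utility not stated
    "help kid with homework": (None, Level.HIGH),  # utility not stated
}

plan = {name: triage(utility, need) for name, (utility, need) in TASKS.items()}
```

Here `plan["sales email"]` comes back as the draft-then-rewrite option, which is the middle case the paragraph above warns about.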

Goodhart's Law is in full effect.

The camping rule applies here, too.

A saw is great for cutting firewood. A saw is terrible for whittling a toy for your kid. The tool doesn't change. The task does.

Then, at the end of the week, a different kind of AI story showed up.

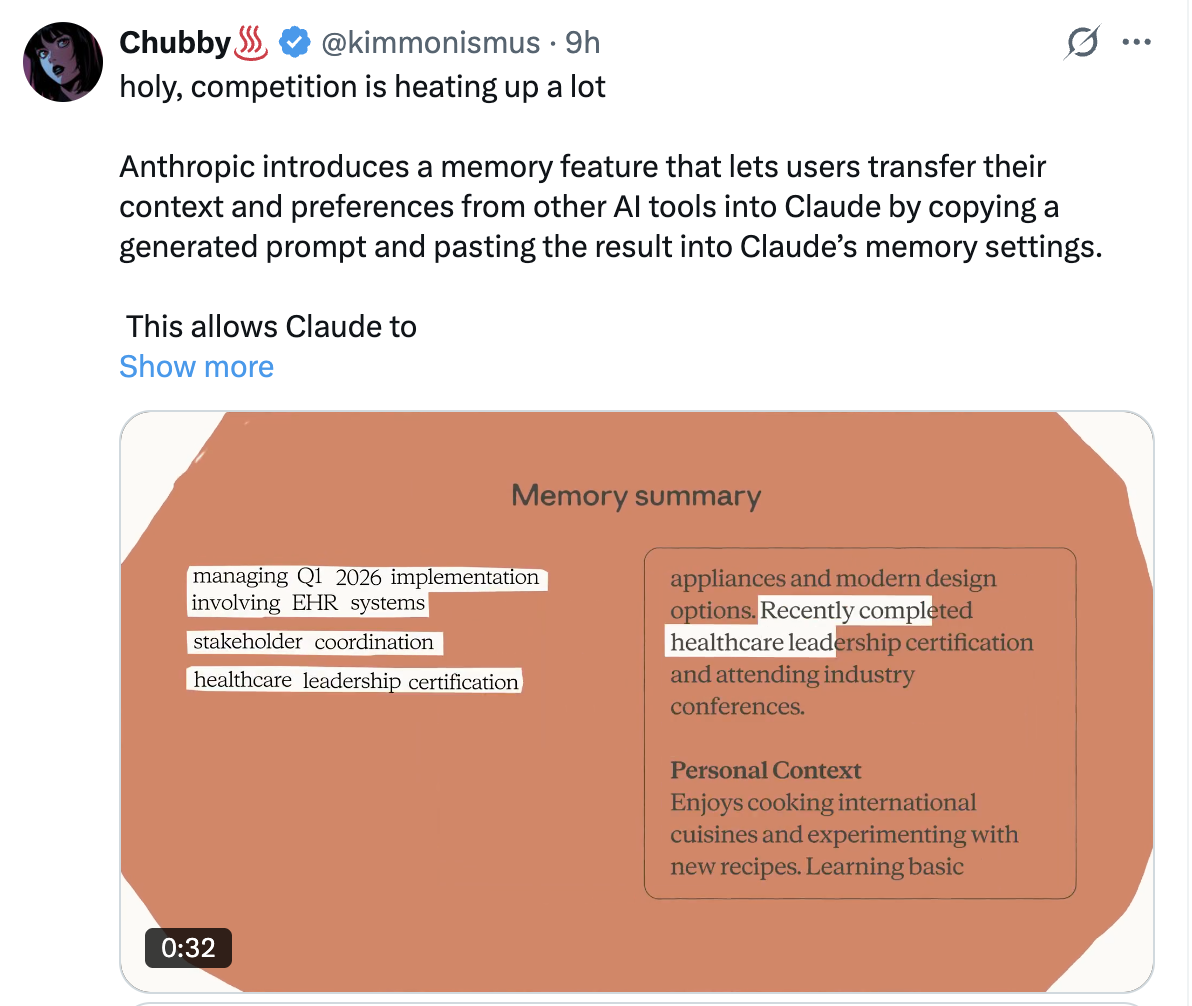

Anthropic launched a memory import feature. You paste a specific prompt into ChatGPT, Gemini, or Perplexity, and it extracts everything that tool has learned about you.

Your preferences, your projects, your communication style, your technical stack.

You copy the output into Claude's memory settings. A few short interactions later, and Claude knows you the way your previous AI tool did.

I wrote about this in April 2025, in "AI Memory Features Will Transform Search and Marketing."

Memory transforms AI from isolated queries into a working understanding of who you are.

The deciding factor would be the portability of that data.

That's what Anthropic just built.

In January, I went deeper in "Complete Context Graphs and Why Memory Needs to Forget," arguing that real memory systems need selective forgetting, temporal validity, and fact resolution.

Not all context should persist.
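To make those three properties concrete, here is a minimal Python sketch. The `MemoryFact` fields, the `current_facts` helper, and the sample facts are hypothetical; this is not how Claude, or any other product, stores memory.

```python
from dataclasses import dataclass
from datetime import date
from typing import Dict, Iterable, Optional


@dataclass
class MemoryFact:
    key: str                           # what the fact is about, e.g. "employer"
    value: str
    valid_from: date                   # temporal validity: when it became true
    valid_until: Optional[date] = None  # None means "still true"


def current_facts(facts: Iterable[MemoryFact], today: date) -> Dict[str, MemoryFact]:
    """Return one fact per key that is valid on `today`."""
    latest: Dict[str, MemoryFact] = {}
    for fact in facts:
        if fact.valid_from > today:
            continue  # not true yet
        if fact.valid_until is not None and fact.valid_until < today:
            continue  # selective forgetting: expired context is dropped
        kept = latest.get(fact.key)
        # Fact resolution: when two facts share a key, the newer one wins.
        if kept is None or fact.valid_from > kept.valid_from:
            latest[fact.key] = fact
    return latest


# Made-up sample data for illustration only.
facts = [
    MemoryFact("employer", "Old Co", date(2020, 1, 1), date(2024, 12, 31)),
    MemoryFact("employer", "New Co", date(2025, 1, 1)),
    MemoryFact("editor", "vim", date(2019, 6, 1)),
    MemoryFact("editor", "VS Code", date(2023, 3, 1)),
]
remembered = current_facts(facts, today=date(2026, 3, 3))
```

With this sample data, `remembered` keeps the newer employer and the newer editor and drops the rest, which is the behavior "not all context should persist" asks for.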

Memory is the accumulated knowledge of who you are and how you work. And Anthropic just made it portable.

It's a very strong development for AI, and it will continue to make Memory the biggest change to Search you've ever experienced.

And remember, respect the tool.
