The AI Job Fear Mirror

We trust our own context, then forget everyone else has one too

Christian Ward

May 5, 2026

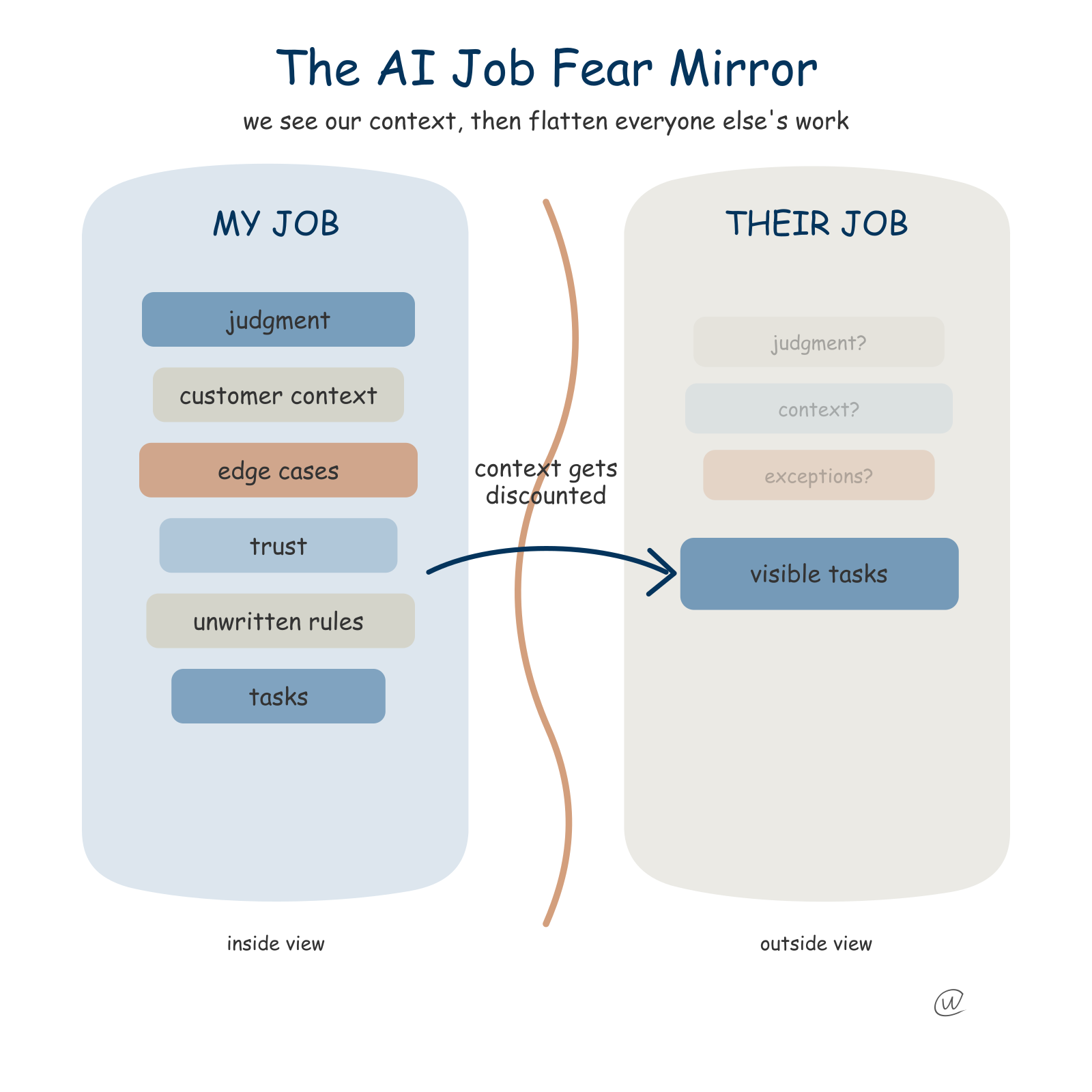

Everyone seems very confident that AI will take someone else's job before it takes their own. Ask them about their own work and the answer changes.

Their job has judgment.

Their job has edge cases, weird customers, unwritten rules, fragile workflows, and that spreadsheet nobody is allowed to touch because Linda made it in 2017 and it still runs payroll.

Then they look across the hallway and say, "Yeah, but AI can do that department."

That feels less like a forecast and more like a cognitive bug.

The Dunning-Kruger effect gets flattened into a joke about dumb people thinking they are smart, but the useful version is about missing the shape of your own ignorance. That is how a lot of AI job fear sounds right now.

Ask a product manager whether ChatGPT, Claude, or Gemini can replace product management and you get a careful answer about judgment, customer nuance, prioritization, and timing.

Ask the same person whether AI can replace legal review, customer support, recruiting, account management, junior engineering, or design research and the answer gets cleaner.

The messy local context disappears.

Aaron Levie had the cleanest version of this on X. He called it a work version of Gell-Mann amnesia.

People use AI in their own job, see the last-mile mess, then look at someone else's job and decide AI will eliminate it.

Michael Crichton used that phrase for watching news coverage get your own field wrong, then trusting the same source on fields you do not know. AI gives us a version of that at work.

We see the model fail inside our own domain and understand the failure with painful precision.

Then we turn the page.

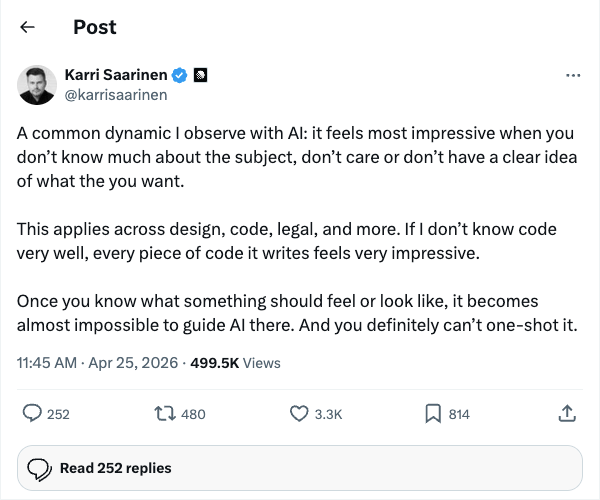

Karri Saarinen made the same point from the other side. AI often looks strongest when your standards are blurriest.

That line should make everyone a little uncomfortable.

If I ask AI to work in a field where I have no taste, the output looks great because I cannot see the gaps. If I ask it to do something in a field where I have taste, the first response is the beginning of the work.

That does not mean the model is useless.

It means the human layer moves from typing the first draft to knowing what the draft is missing.

That is why Lenny Rachitsky's thread about Cat Wu and Claude Code is a useful counterweight. It is not a story about work vanishing.

It is a story about work changing shape.

Anthropic's product timelines compress from months to weeks, sometimes days. PM work shifts from coordinating multi-quarter plans to helping teams ship and learn.

The strongest unit becomes an engineer with product taste.

The future, in Cat Wu's framing through Lenny, looks less like one person being replaced and more like one person managing agents, checking outputs, giving feedback, and improving the system.

That is not comfort. It is a faster treadmill with redesigned handrails.

The replacement story is attractive because it has a crisp shape.

Task exists. Model performs task. Human no longer needed.

But a job is not a stack of isolated tasks.

The model may write the first customer email, but it does not know which customer is furious because procurement delayed the renewal. That context can be written down.

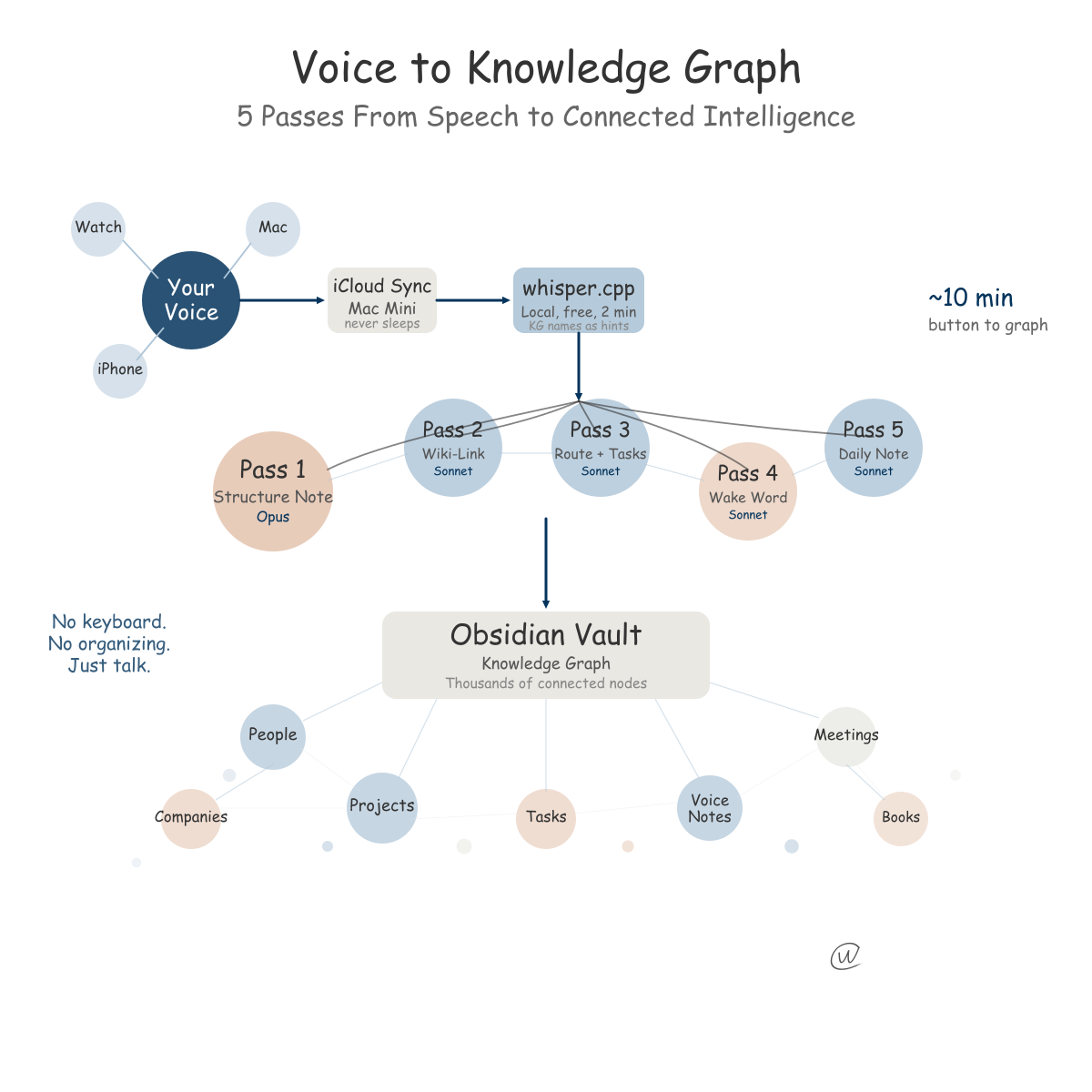

I am building my own version of that written-down context with Obsidian, voice notes, calendar context, knowledge graph entries, and an assistant layer that can retrieve the messy trail behind the work.

The funny part is how much of the useful signal looks useless while I am capturing it. A voice note about a meeting, a weird sentence from a customer call, a half-formed idea while walking, a calendar block that explains why I was annoyed when I wrote something.

None of that looks like "work product" from the outside.

But when Clio, my assistant, pulls the thread back together two weeks later, the answer changes because the model can see the breadcrumbs I forgot I left.

The context does not appear because the model is smart. It appears because the system around the model was built to carry it.
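As a rough illustration of that idea, here is a minimal Python sketch. It is not the Obsidian setup described above. Every name in it (`Note`, `gather_context`, `build_prompt`, the customer "Acme", the two-week window) is a hypothetical stand-in, and the keyword match is a deliberately crude substitute for whatever retrieval a real system would use. The point is only that the breadcrumbs get attached to the question before any model sees it.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Note:
    """One captured breadcrumb. All fields are hypothetical, for illustration."""
    when: datetime
    source: str  # e.g. "voice", "calendar", "call"
    text: str


def gather_context(notes, keywords, asked_at, window_days=14):
    """Return notes from the last `window_days` that mention any keyword, oldest first."""
    cutoff = asked_at - timedelta(days=window_days)
    wanted = [k.lower() for k in keywords]
    hits = [
        n for n in notes
        if cutoff <= n.when <= asked_at
        and any(k in n.text.lower() for k in wanted)
    ]
    return sorted(hits, key=lambda n: n.when)


def build_prompt(question, context_notes):
    """Prepend the retrieved breadcrumbs to the question before it reaches a model."""
    lines = [f"[{n.when:%Y-%m-%d} {n.source}] {n.text}" for n in context_notes]
    return "Context:\n" + "\n".join(lines) + "\n\nQuestion: " + question


# Made-up notes; "Acme" is an invented customer name.
notes = [
    Note(datetime(2026, 4, 20), "call", "Acme says procurement delayed the renewal."),
    Note(datetime(2026, 4, 22), "voice", "Idea: simplify onboarding checklist."),
    Note(datetime(2026, 4, 23), "calendar", "Acme renewal review moved again."),
    Note(datetime(2026, 3, 1), "voice", "Acme kickoff went well."),
]

asked_at = datetime(2026, 5, 1)
context = gather_context(notes, ["Acme"], asked_at)
prompt = build_prompt("Draft a reply to Acme about the renewal.", context)
print(prompt)
```

The model receiving `prompt` is no smarter than before. It simply gets the call note and the calendar entry that explain why this customer is upset, which is the part a bare "write the email" request leaves out.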

The better fear is that your job becomes easier to inspect, split, route, measure, and compare. That is a different kind of pressure.

It says the vague parts of the job have to become less vague.

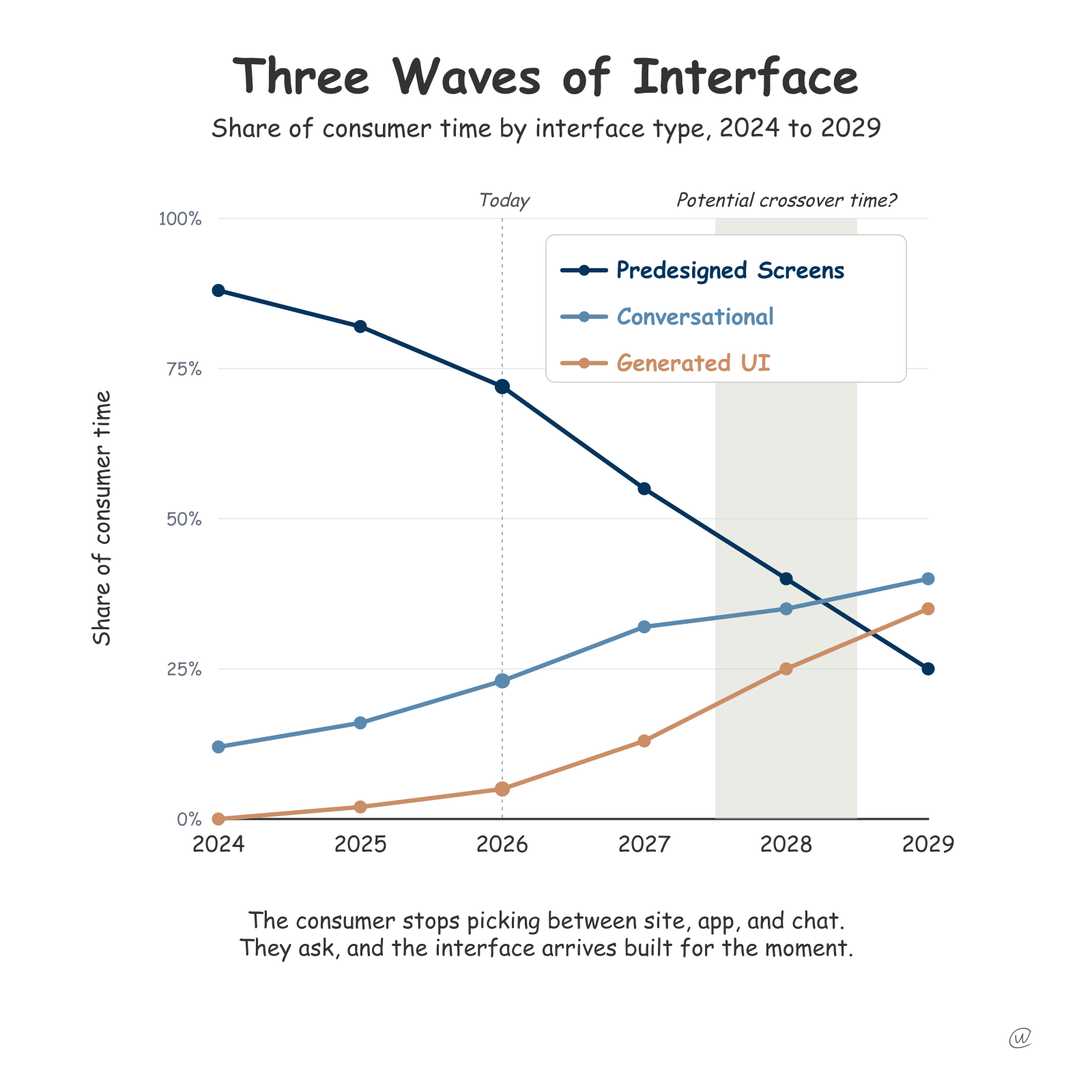

I think that is why the anxiety feels so uneven. People defend their own context because they live inside it, then discount everyone else's because they see the visible output.

Customer support looks like answers. Design looks like screens. Recruiting looks like scheduling. Product looks like tickets and docs. Sales looks like emails and calls.

Reduce a job to visible residue and AI looks closer than it is.

The next few years may punish two groups first.

The first group is people whose work is mostly visible residue because the judgment layer is thin. The second group is people with real judgment who cannot explain or teach that judgment to anyone else.

They may be great at their jobs. But if their advantage lives in private intuition, AI will make the company ask what that intuition is made of.

That is the part I keep coming back to.

The safest job is not the one AI cannot touch. It is the one where the human can show the context, explain the judgment, and use the machine to try more things.

That is the mirror.

Everyone else's job looks simpler from across the hallway. So does yours.

Make the invisible part visible before someone mistakes your work for the part they can see.
