AI Memories Need an Expiration Date

What 20 Years of Data Decay Should Teach AI About Personalization

Christian Ward

Mar 31, 2026

Hollywood loves to glorify the superhuman brain. The person who remembers everything is the hero. The list is long:

Good Will Hunting — Will Hunting, solves impossible math problems

Rain Man — Raymond Babbitt, counts cards and recalls phone books

A Beautiful Mind — John Nash, sees patterns in chaos

Limitless — Eddie Morra, unlocks 100% of his brain

Lucy — Lucy, gains total cognitive control

The Bourne Identity — Jason Bourne, perfect combat recall with no memory of who he is

Phenomenon — George Malley, absorbs entire languages and textbooks overnight

But the movies always get more complicated.

The gift starts breaking things.

Will Hunting can solve any equation but can't hold a relationship together.

John Nash in the movie sees patterns nobody else can, but his brain also generates perfect delusions that it can't distinguish from reality. Which, by the way, is not exactly how the actual John Nash was reported to experience them.

Lucy gains total recall and loses her humanity in the process.

That's the arc almost every time. Perfect memory sounds like a superpower until you live with it.

In reality, it would drive most of us nuts.

Now imagine something in your pocket that remembers every conversation, every place you go, and every product you buy.

We already have a rough version of this in what Google can see about us. But we are about to use AI agents in a much more aggressive way, and those agents are going to remember everything.

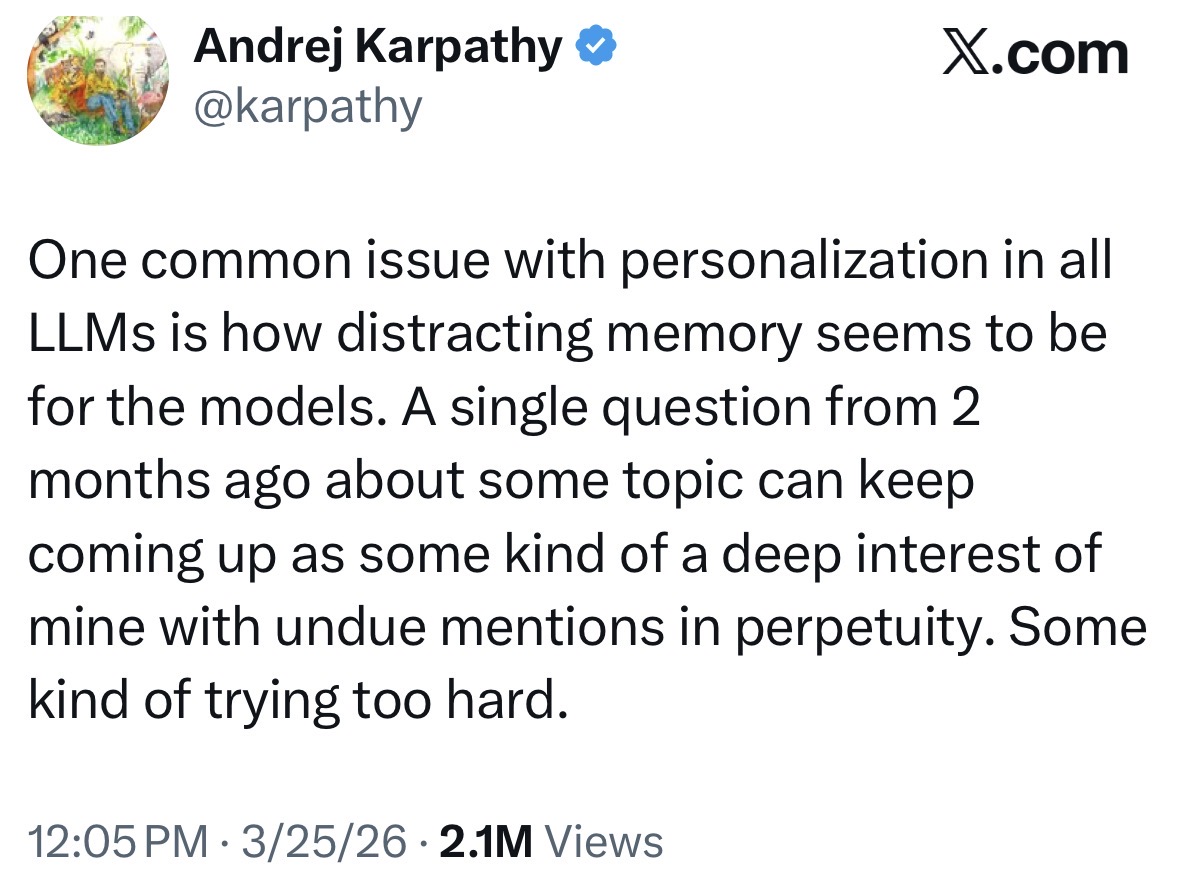

Andrej Karpathy posted a tweet this week that hit 2.1 million views in a couple of hours. His reach is massive, but this was a nuanced take on LLM memory, not a hot take or a product launch.

That many people engaged because, well, I think we all recognize the problem.

You mentioned looking for sushi once, three months ago, because the person sitting next to you wanted a recommendation.

Now your trusted AI thinks you're a sushi lover.

Every suggestion gets filtered through a single data point that was never really about you.

I have the same problem with Audible.

My account has my kids' summer reading, my wife's book club picks, my theology books, my son's Percy Jacksons, and my AI research all jumbled together.

The recommendation engine has decided I need a teenage vampire series set in ancient Greece with a subplot about the AI at the Council of Nicaea.

Nobody has written that book.

But if they do, I have serious concerns about the target audience.

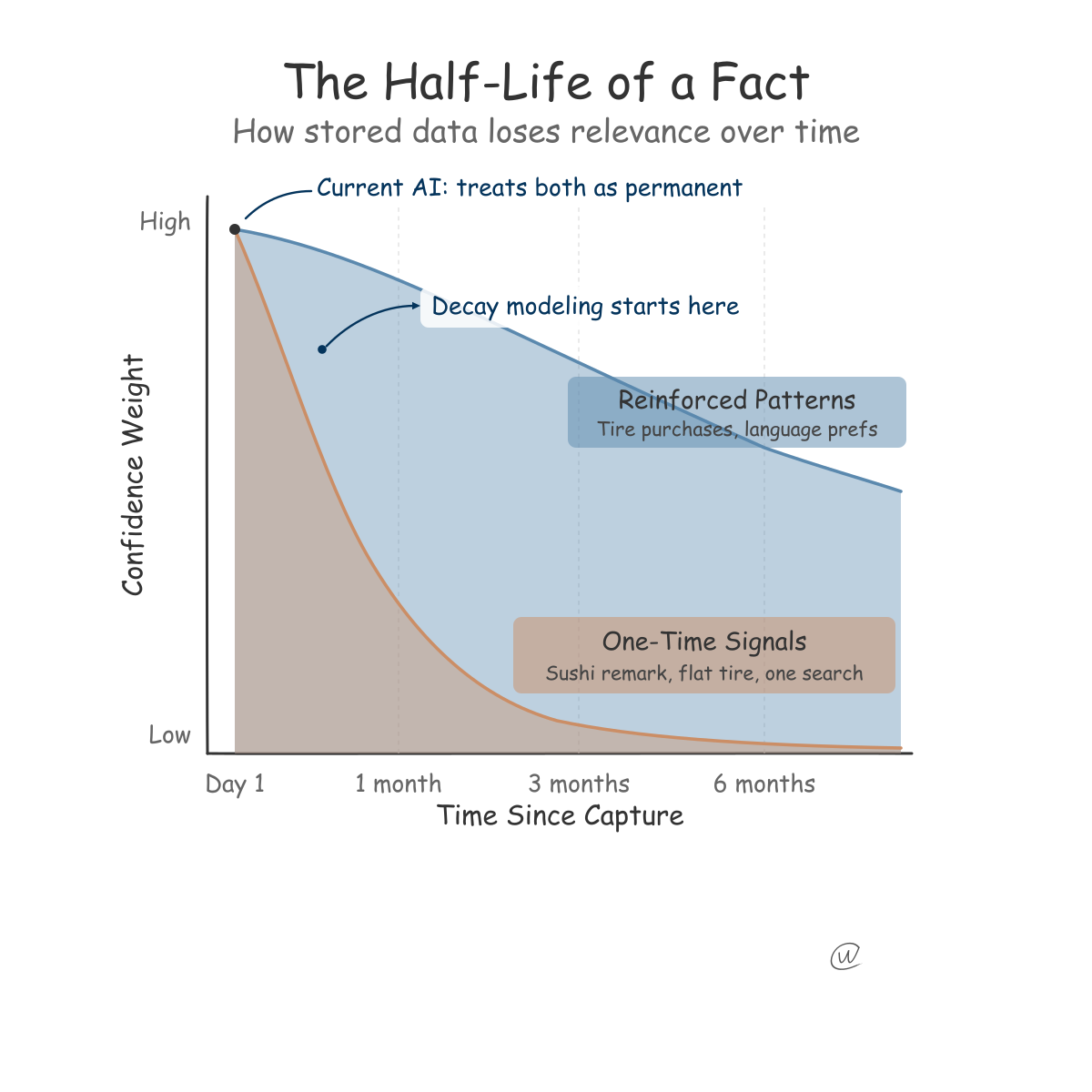

At my job with Yext, I often modeled data decay, which is the measurable degradation of information accuracy over time. This was also a big part of data accuracy work at Thomson Reuters decades ago.

The moment you capture any piece of business data, it becomes less relevant.

We modeled half-lives by category and industry because rates vary across them.

Half-lives by category

A plumber's service list might stay accurate for 18 months. A restaurant's menu could be wrong within two weeks of capture. And the soup du jour? Well, it's the soup of the day. Inventory changes in real time.
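One way to make the half-life idea concrete is plain exponential decay, where a record's confidence halves every half-life. This is a minimal sketch, not the model we actually used: it assumes the 18-month and two-week figures above can be treated as half-lives, and the function name, category keys, and one-day soup value are made up for illustration.

```python
def decayed_confidence(age_days: float, half_life_days: float) -> float:
    """Confidence left in a fact after age_days, halving every half_life_days."""
    if half_life_days <= 0:
        raise ValueError("half_life_days must be positive")
    return 0.5 ** (age_days / half_life_days)


# Assumed half-lives in days, loosely based on the examples above (illustrative only).
HALF_LIFE_DAYS = {
    "plumber_service_list": 18 * 30,  # roughly 18 months
    "restaurant_menu": 14,            # two weeks
    "soup_du_jour": 1,                # it's the soup of the day
}

if __name__ == "__main__":
    # Compare how much confidence each category keeps 90 days after capture.
    for category, half_life in HALF_LIFE_DAYS.items():
        print(category, round(decayed_confidence(90, half_life), 4))
```

The same 90-day-old record is still fairly trustworthy for the plumber and close to worthless for the menu, which is the whole point of modeling by category.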

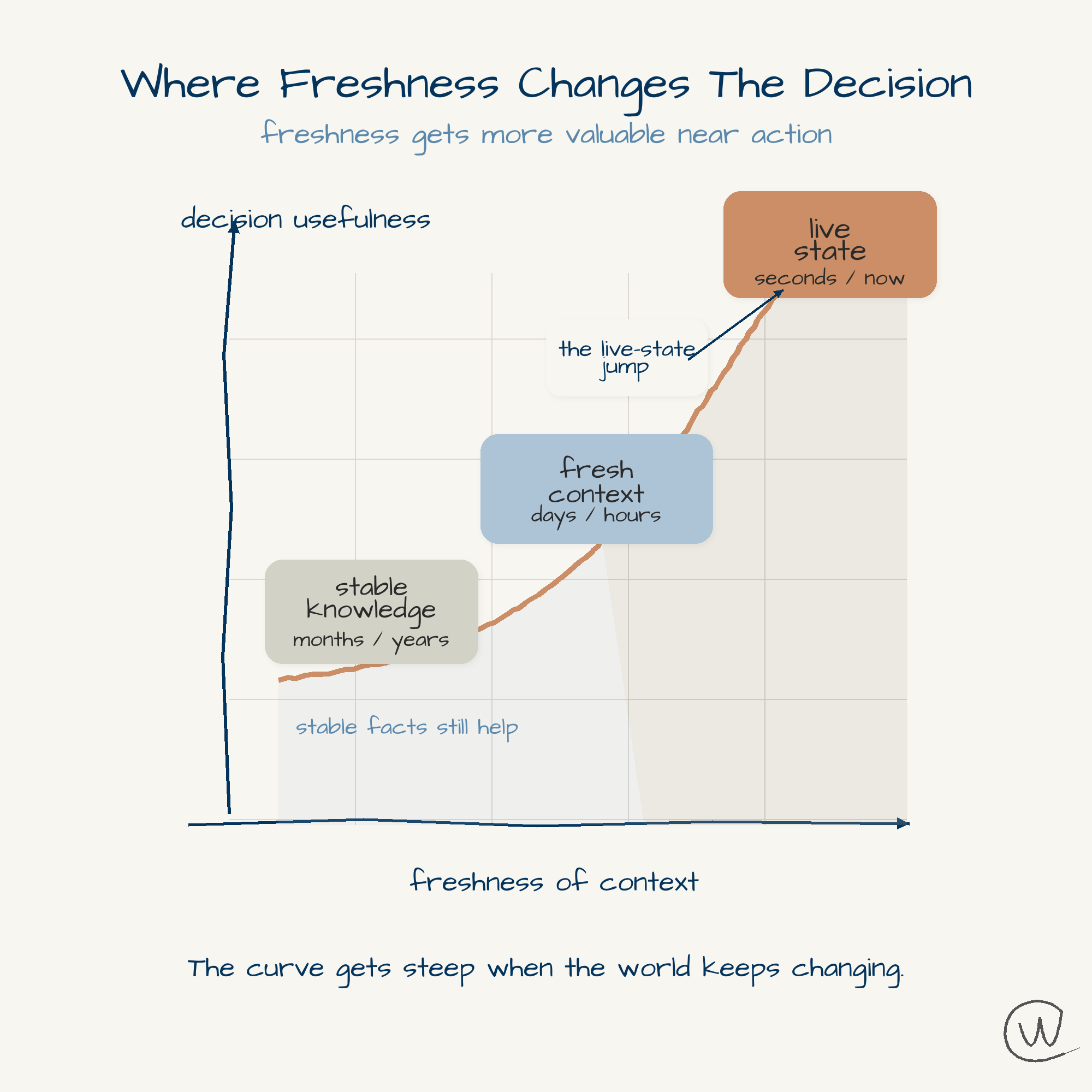

This framework applies to how AI stores what it learns about you.

Nobody in the major labs seems to be building for it.

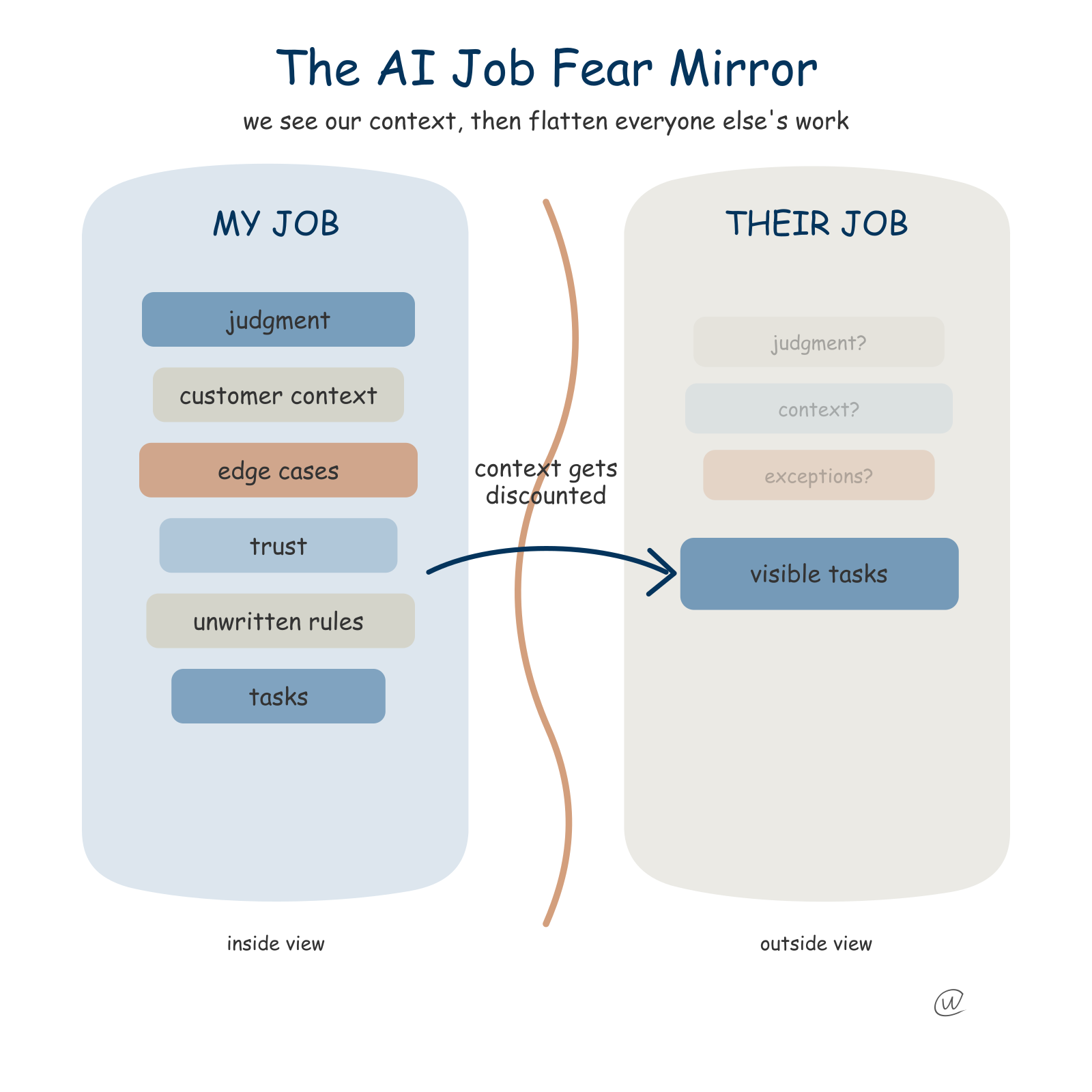

What Karpathy is pointing out is something I've been studying, because memory is one of my biggest interests in this space — something I first wrote about in AI Memory Features Will Transform Search and Marketing. The current models struggle with two specific problems:

Overweighting memories — treating all memories as equal in importance

Cross-pollination across genres — where health data and finance data bleed into unrelated choices

There are more issues, but those are two of the most obvious.

Our own memories have evolved to be phenomenal at understanding the context in which they get called up.

This happens so well that you only notice it when a memory jumps into your mind and seems out of context.

It is like being hit by a familiar childhood smell, and a memory returns out of nowhere. The reason that feels so jarring is that it almost never happens that way.

When ChatGPT or Gemini stores a fact about you, it treats that fact as permanent.

There is no weighting mechanism that says "this was captured six months ago and should carry less confidence," no category model that recognizes food preferences decay faster than language preferences, and no signal architecture that distinguishes between an offhand remark and a reinforced pattern.
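To show what those three missing pieces could look like together, here is a rough sketch that combines age, a per-category half-life, and a reinforcement count into one weight. Everything in it is an assumption for illustration: the `Memory` fields, the half-life numbers, the 180-day default, and the reinforcement formula are hypothetical, not how ChatGPT or Gemini work.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical half-lives in days; real values would have to be measured.
CATEGORY_HALF_LIFE_DAYS = {"food": 60, "language": 3650}
DEFAULT_HALF_LIFE_DAYS = 180  # assumed fallback for unknown categories


@dataclass
class Memory:
    fact: str
    category: str
    captured_on: date
    mentions: int = 1  # separate times the user brought this up


def memory_weight(memory: Memory, today: date) -> float:
    """Score between 0 and 1: recent, slow-decaying, reinforced memories rank higher."""
    age_days = max((today - memory.captured_on).days, 0)
    half_life = CATEGORY_HALF_LIFE_DAYS.get(memory.category, DEFAULT_HALF_LIFE_DAYS)
    recency = 0.5 ** (age_days / half_life)
    # One mention scores 0.5, two about 0.67, five about 0.83, so an offhand
    # remark never outweighs a repeated pattern of the same age and category.
    reinforcement = memory.mentions / (memory.mentions + 1)
    return recency * reinforcement


if __name__ == "__main__":
    today = date(2026, 3, 2)
    sushi = Memory("asked about sushi", "food", date(2026, 1, 1), mentions=1)
    spanish = Memory("prefers Spanish", "language", date(2026, 1, 1), mentions=5)
    print(memory_weight(sushi, today), memory_weight(spanish, today))
```

With these made-up numbers, the one-off sushi question fades quickly while the repeated language preference barely moves.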

Think about Jason Bourne for a second. He has this incredible tactical memory. He can disarm anyone, speak six languages, and read a room in two seconds.

But he has no idea how he acquired any of it.

He can't see his own inventory. He can't choose what to keep or what to let go. The memory both fails and saves him.

That is where AI memory is right now.

ChatGPT stores facts about you.

Go look right now. Open Settings, then Personalization, then Memory. It's a flat list of disconnected facts with no weighting, no context, no sense of what's current and what's stale.

And it's STILL AMAZING as an advancement. It's just early.

"Those who forget the past are doomed to repeat it." Everyone knows that line.

But here's my twisted version for AI: if you can't forget the past, you're doomed to live in it.

The human brain figured this out a long time ago.

We forget on purpose. We let the sting of an argument fade so we can stay married.

We let the details of a bad meal blur, so we go back to a restaurant we otherwise love. We soften the edges of grief so we can function.

Forgetting is not a bug in human memory.

It is the feature that makes the rest of it work.

I don't think these systems will ever ask for explicit permission before storing data in memory.

That's not how the UX is going to evolve. ChatGPT is already storing memories without being asked, and that genie is not going back into the bottle. I think that's the right design choice, by the way.

What I do think will happen is that the memory layer becomes visible.

Instead of a flat list of facts buried in a settings menu, you'll get something closer to a map. A holistic view of what the AI thinks it knows about you, organized by category, weighted by confidence, and tagged with timestamps.

You should be able to ask: "What do you know about me?"

And the answer should not be a wall of text. It should be a picture you can understand in five seconds.

The AI should answer, "Here's what I'm confident about, here's what's fading, here's what I flagged as a one-time event."
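Behind a picture like that, the data could be as simple as facts grouped by category, each with a status and a timestamp. The sketch below is one hypothetical shape for it: the `StoredFact` fields, the 0.7 and 0.5 thresholds, and the three status labels are assumptions for illustration, not any vendor's schema.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass
class StoredFact:
    fact: str
    category: str
    confidence: float  # 0.0 to 1.0, however the system computes it
    last_seen: date
    mentions: int


def status(item: StoredFact) -> str:
    """Bucket a fact using illustrative thresholds (assumptions, not a standard)."""
    if item.mentions == 1 and item.confidence < 0.5:
        return "one-time event"
    return "confident" if item.confidence >= 0.7 else "fading"


def memory_map(items: list[StoredFact]) -> dict[str, list[tuple[str, str, str]]]:
    """Group facts by category as (fact, status, last-seen date) rows, strongest first."""
    grouped = defaultdict(list)
    for item in sorted(items, key=lambda i: i.confidence, reverse=True):
        grouped[item.category].append(
            (item.fact, status(item), item.last_seen.isoformat())
        )
    return dict(grouped)
```

A front end could render each category as a cluster and each status as a color, which gets you closer to the five-second picture than a flat list does.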

The system should also check in from time to time. Not in an annoying "Are you still watching?" way.

More like: "You mentioned you were training for a marathon six months ago. Is that still part of your life, or should I let that go?"...(loser.)
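A check-in like that needs a rule for when to ask and when to stay quiet. Here is one possible rule, sketched under assumptions: ask only once a memory is older than its half-life, and never more often than every 90 days. The function names, the 90-day default, and the prompt wording are all hypothetical.

```python
from datetime import date
from typing import Optional


def should_check_in(
    last_confirmed: date,
    today: date,
    half_life_days: int,
    last_asked: Optional[date] = None,
    min_days_between_asks: int = 90,
) -> bool:
    """True when a memory is past its half-life and the user was not asked recently."""
    if (today - last_confirmed).days < half_life_days:
        return False  # still fresh enough to trust without asking
    if last_asked is not None and (today - last_asked).days < min_days_between_asks:
        return False  # asked recently, so don't nag
    return True


def check_in_prompt(fact: str) -> str:
    """Build the question shown to the user for a stale memory."""
    return (
        f"A while ago you mentioned {fact}. "
        "Is that still part of your life, or should I let that go?"
    )


if __name__ == "__main__":
    if should_check_in(date(2025, 9, 1), date(2026, 3, 1), half_life_days=120):
        print(check_in_prompt("training for a marathon"))
```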

The next generation of personalization won't be defined by how much a system can store. Right now, that is a serious limitation.

Soon, the memory will be defined by how well it can let go.

The data decay models have been developed over two decades of business data management. I see the hardware, the compression, and the data-decay algorithms coming together very soon.

This week, Google Research released TurboQuant, a compression algorithm reported to reduce LLM key-value cache memory by 6x while achieving an 8x speedup with zero accuracy loss.

The memory layer is getting faster, cheaper, and more efficient every month.

The missing piece isn't storage capacity. It's storage intelligence.

Check what your AI has stored about you.

Open the memory settings in ChatGPT, Gemini, or any platform that claims to "remember" you. You will be surprised by what it thinks it knows.

Also, tell your AI when it's using the memory incorrectly.

I don't know if they are ready for that, but it is worth documenting the response and behavior.

I think memory is the most insane opportunity for real human adoption and benefit. It could also be the thing that sets off massive resistance if done wrong.

But as I said before, it's early.
