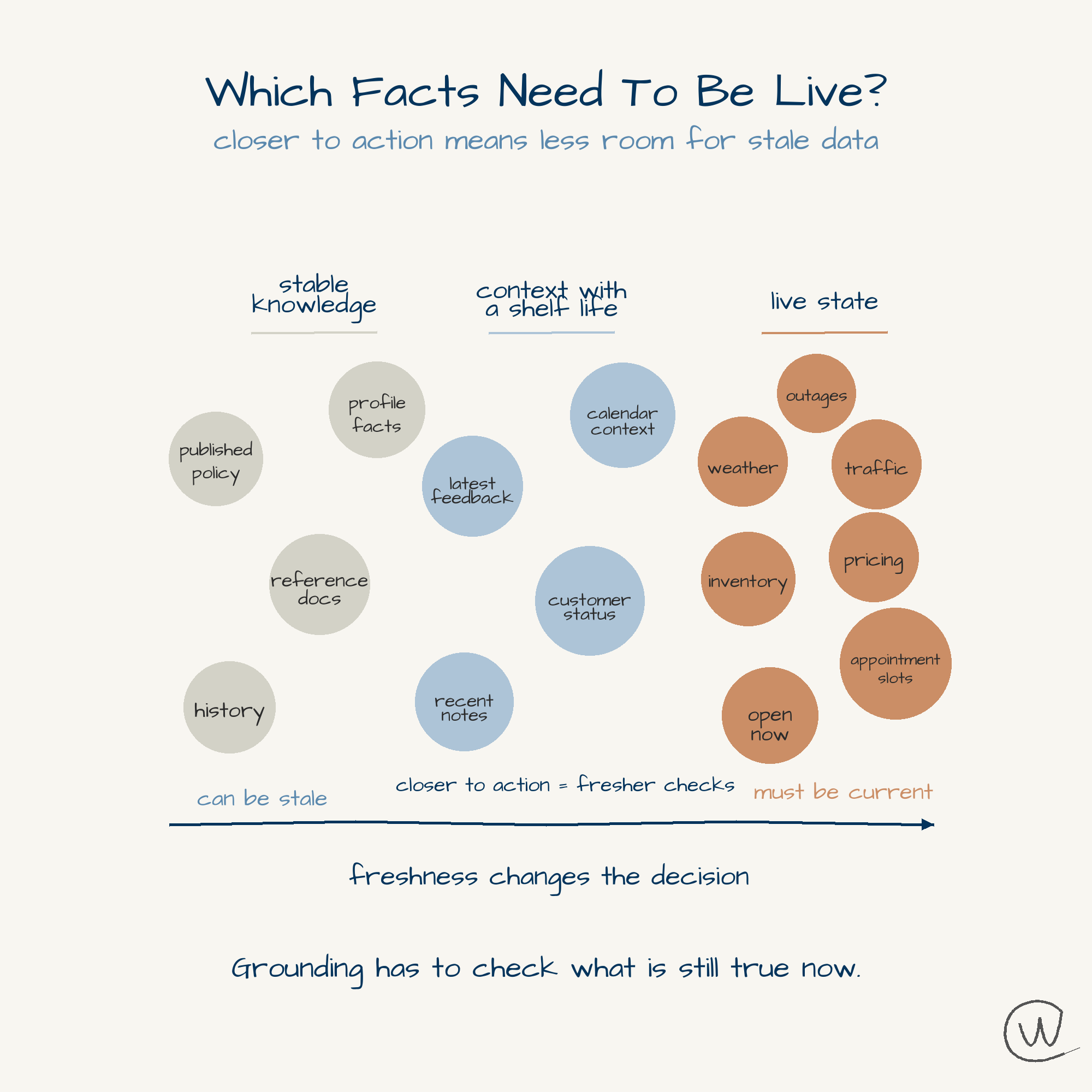

Grounding Needs To Know When Facts Expire

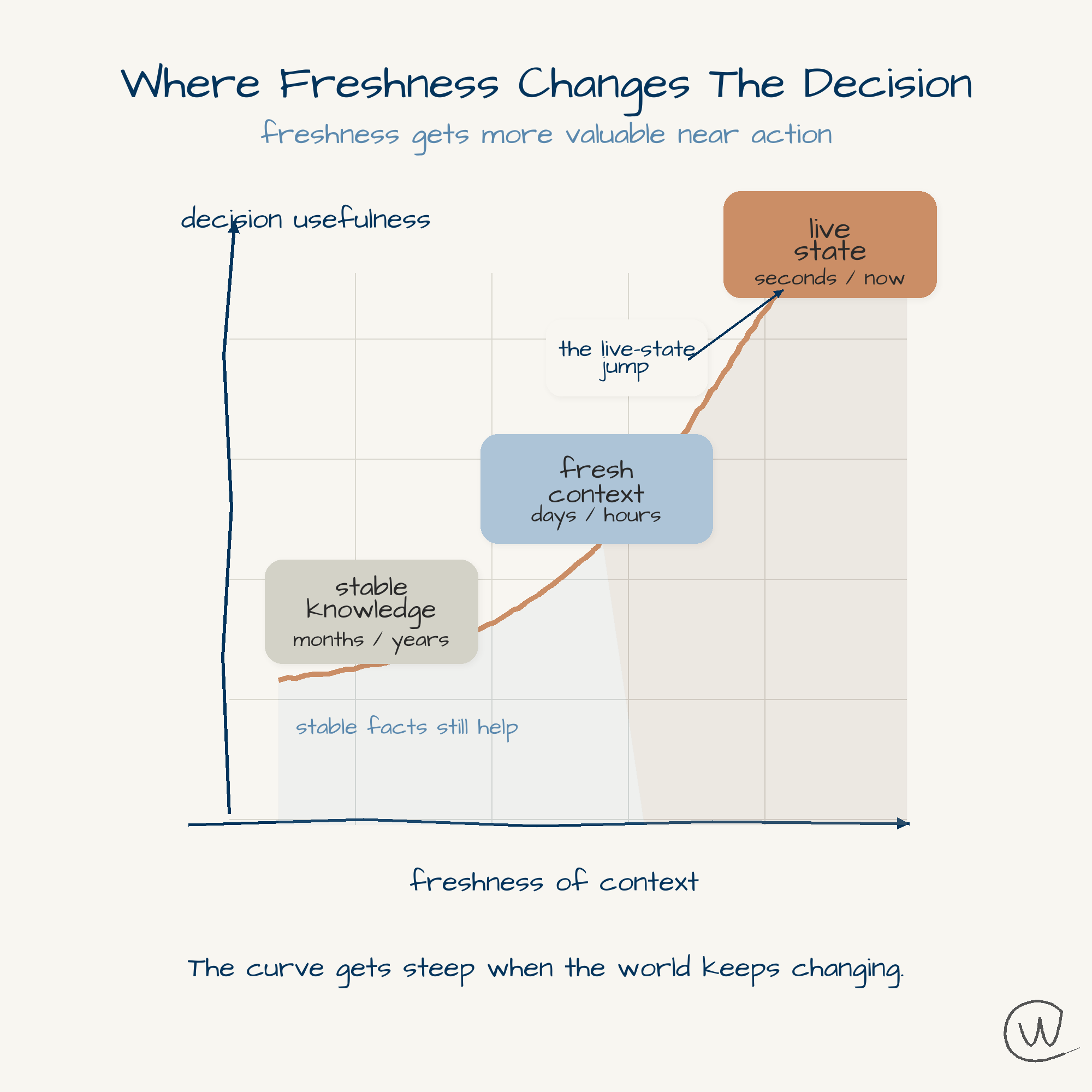

Stable knowledge, context, and live state do not decay at the same speed.

Christian Ward

May 11, 2026

Most AI grounding conversations stop at evidence.

Did the model retrieve a source? Did the source look credible? Did a few pages agree?

Those are useful questions, but they leave out time.

A sourced page can still be stale by the time someone acts.

A citation can tell the model where a fact came from. It cannot tell the model whether that fact is still alive.

The difference shows up once AI starts acting on the answer instead of handing you links to inspect.

Some facts age slowly. Some depend on the person asking. Some are gone by the time you click.

Treat those the same and the system sounds smart right up until someone tries to use it.

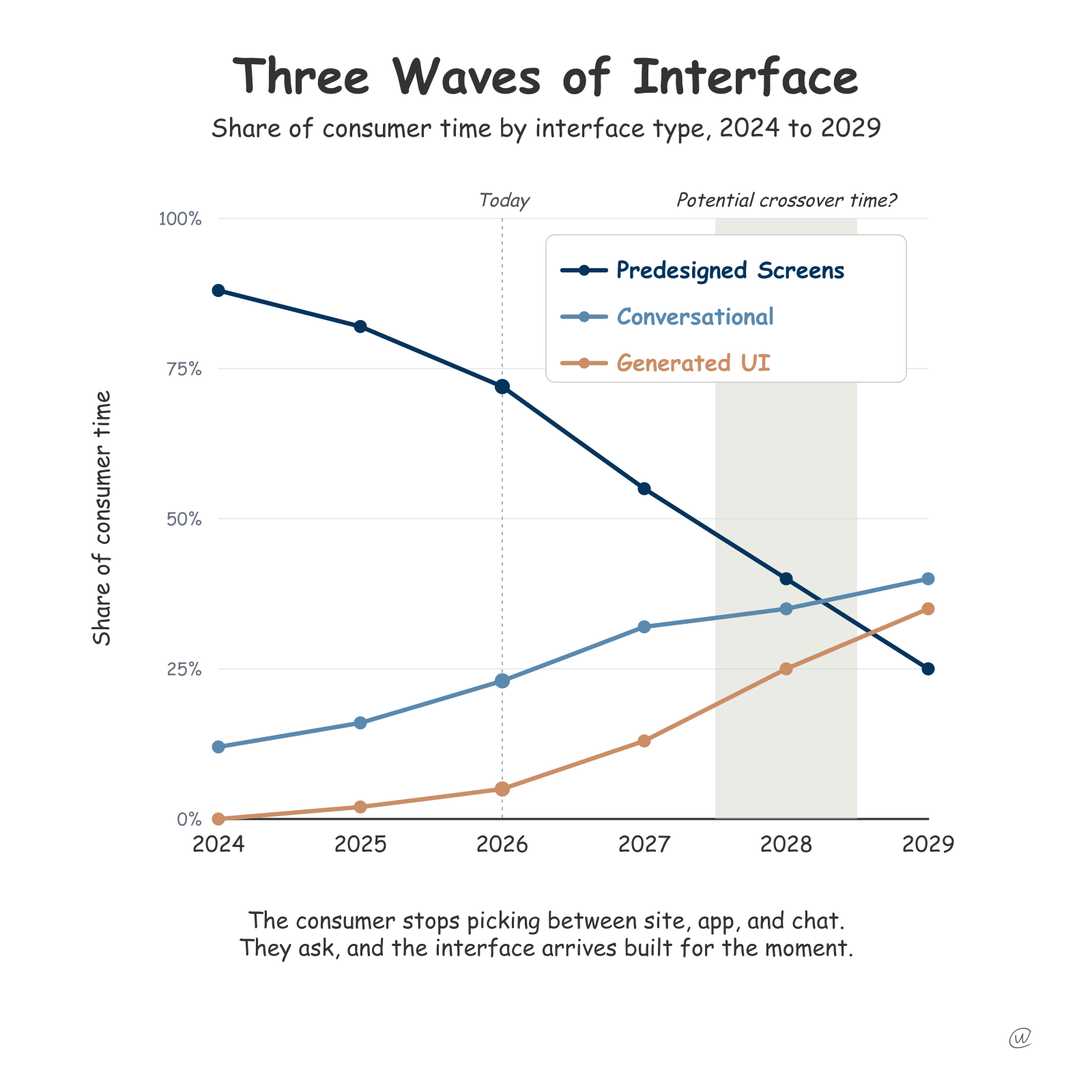

Search was built for a world where the human did the last mile.

A search engine could show ten links. You decided which one looked current enough. If the first result felt stale, you checked another.

The index did not have to be a live sensor for reality. It had to help you find something worth reading.

Krishna Madhavan’s Microsoft Bing post, Evolving Role Of The Index, puts useful language around this shift.

Once the system summarizes, recommends, books, buys, routes, or drafts the answer, the user stops inspecting links and starts relying on the answer.

Freshness moves inside the product.

Stable knowledge is where AI already feels strongest.

The Roman Empire. TCP/IP. A company history. A published framework.

These facts still need sources, but they do not usually punish the user for being a few days old.

Context belongs to the person, the business, or the situation.

I see this at work all the time. Product moves quickly, and the latest spec, customer feedback, or internal decision does not always make it into the place the AI is reading fast enough.

That does not make grounding bad. It makes grounding incomplete unless the system can reach the freshest operational context.

A model can explain renewal risk or plan a vacation in general. It only gets useful when the specific details have made it into memory: the delayed procurement paperwork, the kid who gets carsick, the spouse who hates late flights.

But context is not permanent truth.

A sushi recommendation from three months ago may not mean you love sushi. It may mean the person next to you wanted lunch.

Context needs weight, source, and expiration.

Otherwise personalization turns into a rumor about you.
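One way to picture weight, source, and expiration together is a remembered signal whose weight decays with age. The sketch below is illustrative only: the `ContextSignal` type, the half-life model, and the 0.2 threshold are assumptions made for this example, not a description of any particular memory system.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class ContextSignal:
    """A remembered detail about a person (hypothetical structure)."""
    claim: str
    source: str            # where the signal came from, e.g. "order_history"
    weight: float          # initial confidence between 0 and 1
    observed_at: datetime  # when the signal was recorded
    half_life: timedelta   # assumed time for the weight to halve

    def current_weight(self, now: datetime) -> float:
        """Exponentially decay the initial weight by the signal's age."""
        age_seconds = (now - self.observed_at).total_seconds()
        if age_seconds <= 0:
            return self.weight
        halvings = age_seconds / self.half_life.total_seconds()
        return self.weight * 0.5 ** halvings


def is_usable(signal: ContextSignal, now: datetime, threshold: float = 0.2) -> bool:
    """Treat a signal as expired once its decayed weight drops below the threshold."""
    return signal.current_weight(now) >= threshold


now = datetime(2026, 5, 11, 12, 0)

# A single sushi order from three months ago: weak evidence, short half-life.
sushi = ContextSignal(
    claim="likes sushi",
    source="order_history",
    weight=0.4,
    observed_at=now - timedelta(days=90),
    half_life=timedelta(days=30),
)

# A preference the person stated directly last week: stronger, slower to fade.
flights = ContextSignal(
    claim="avoids late flights",
    source="stated_preference",
    weight=0.9,
    observed_at=now - timedelta(days=7),
    half_life=timedelta(days=180),
)

print(is_usable(sushi, now))    # the old one-off order has faded
print(is_usable(flights, now))  # the recent stated preference still counts
```

The design choice worth noticing is that the half-life belongs to the signal, not to the system. A one-off lunch order and an explicit preference should not fade at the same rate.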

Live state is the version of the world someone needs at the moment of action.

Hours. Inventory. Pricing. Appointment slots. Road closures. Local conditions.

I have seen this recently with healthcare appointments. A system sends a notice that an earlier slot may be available, but by the time you tap through, the appointment is already gone.

Nobody lied. The information just expired faster than the experience could handle.

The same pattern shows up in ordinary life. If you ask whether it is a good time to take the boat out fishing, the answer cannot be based on a general weather page from this morning. It needs current wind, storms, tide, and local conditions.

Being wrong is not just inconvenient.

It can put you in a squall.

Live state is reality on a timer.

The financial version makes this obvious.

Nikita Bier’s X post about Cashtags bringing real-time financial data into the timeline is not just a finance feature. It is a clue about where answer layers are going.

If people act on what they read in a feed, the feed cannot only be conversation. It starts to carry live state.

Augusta National makes the same point in a quieter way. No phones, no live location, no quick update. You feel the old version of coordination come back fast: Where are you? Are you still there? Did the plan change?

When I was a kid, if you wanted to know whether someone was around, you rode your bike over. If they were not home, that was the answer. AI products are about to create a strange version of that problem. They will sound like they know where everything is, but action still depends on the current state of the world.

The next version of grounding depends on the operating layer underneath the answer.

Businesses that still treat their website as a brochure will feel this first.

The page can be well written and still lose the moment because the AI cannot verify what the customer needs: Are you open? Is the item available? Can I book it? Is the price still valid?

Those facts need to be callable, current, and consistent across the places machines check.

This is one of the reasons I love the work I get to do. For as long as I have been at Yext, since 2013, the company has been built around keeping facts current.

It was the right bet.

As more AI experiences move from answering to acting, the expensive, unglamorous work of keeping facts current starts to look a lot less optional.

I would separate the data before trying to improve the AI layer.

Stable knowledge can live in documents, pages, policies, and reference material.

Context belongs in memory systems that understand source, confidence, and decay.

Live state needs feeds, APIs, verified profiles, or operational systems that can answer in the moment.

Grounding is not finished at retrieval. It is finished when the product knows what kind of data it has, how fast that data decays, and whether it is safe to act on.
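The three classes above can be sketched as a freshness check that runs before the product acts. Everything here is a minimal illustration under stated assumptions: the `FactKind` names mirror the categories in this post, while the `MAX_AGE` budgets and the `safe_to_act` helper are hypothetical values and names chosen for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from enum import Enum


class FactKind(Enum):
    STABLE = "stable"    # reference material, history, published frameworks
    CONTEXT = "context"  # details about the person, business, or situation
    LIVE = "live"        # hours, inventory, pricing, appointment slots


# Assumed freshness budgets; real values depend on the domain.
MAX_AGE = {
    FactKind.STABLE: timedelta(days=365),
    FactKind.CONTEXT: timedelta(days=30),
    FactKind.LIVE: timedelta(minutes=5),
}


@dataclass
class Fact:
    value: str
    kind: FactKind
    fetched_at: datetime  # when the system last confirmed this value


def safe_to_act(fact: Fact, now: datetime) -> bool:
    """Return True only if the fact is younger than the budget for its kind."""
    return now - fact.fetched_at <= MAX_AGE[fact.kind]


now = datetime(2026, 5, 11, 12, 0)

history = Fact("Company founded in 2006", FactKind.STABLE, now - timedelta(days=10))
slot = Fact("Appointment open at 3:15 pm", FactKind.LIVE, now - timedelta(minutes=10))

print(safe_to_act(history, now))  # ten days old is fine for stable knowledge
print(safe_to_act(slot, now))     # ten minutes old is too stale for a live slot
```

When the check fails for a live fact, the sensible response is to re-query the feed or API rather than answer from the cached value.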

Search helped people decide what to read.

AI has to help people decide what is still true.
